
Just so you know, this is a massive topic and there is no way to discuss all of it here. Also, I think theory first works best because others may not be at the same point. But I think we can make some good inroads and answer what you're looking for along the way. Your code, which worked out of the box - Hell yes! - kinda indicates you know what I'm getting ready to say, but it leaves me with a couple of questions:

*Although I'm not sure about the exact time complexity of your implementation, it's quite certainly worse than O(N^2): all_combination(21,4,2) took more than 5 minutes.

    print('The best split of the data is '+str(split_cb))
    print('The minimum MSE value is '+str(min_mse))

Please let me know if I didn't make it clear in any part.

It took me some time to realize that the problem you're describing is exactly what a decision tree regressor tries to solve. Unfortunately, constructing an optimal decision tree is NP-hard, meaning that even with dynamic programming you can't bring the runtime down to anything like O(NlogN).

The good news is that you can directly use any well-maintained decision tree implementation, for example DecisionTreeRegressor from the sklearn.tree module, and can be certain about obtaining the best possible performance in O(NlogN) time complexity. To enforce a minimum number of points per group, use the min_samples_leaf parameter. You can also control several other properties, such as the maximum number of groups with max_leaf_nodes, or optimization with respect to different loss functions using criterion.

If you're curious how scikit-learn's decision tree compares with the one learnt by your algorithm (i.e. split_cb in your code):

    X = np.array(x).reshape(-1,1)
    dt = DecisionTreeRegressor(min_samples_leaf=MIN_SIZE).fit(X,y)
    # np.unique(..., return_counts=True) returns (leaf ids, counts); the counts are the group sizes
    split_cb = np.unique(dt.apply(X),return_counts=True)[1]

And then use the same plotting code you use. Do note that since your time complexity is considerably higher than O(NlogN)*, your implementation will often find better splits than scikit-learn's greedy algorithm.

Constructing optimal binary decision trees is NP-complete. Information Processing Letters, 5(1), 15–17.
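To make the word "greedy" in the answer above concrete, here is a minimal sketch of the kind of single split a regression tree picks at each node: the cut that most reduces the summed squared error around each side's mean. This is a simplified illustration, not scikit-learn's actual implementation. The function name `best_greedy_split` and its arguments are hypothetical, and the sketch assumes `y` is already ordered by its x value.

```python
def best_greedy_split(y, min_size):
    """Return (split_index, total_sse) for the best single cut of y.

    Hypothetical helper for illustration: y must be non-empty and ordered
    by x, and each side of the cut keeps at least min_size points.
    Returns (None, sse_of_whole) when no valid cut improves the fit.
    """
    n = len(y)
    # Prefix sums of y and y**2 give any segment's squared error in O(1).
    s1, s2 = [0.0], [0.0]
    for v in y:
        s1.append(s1[-1] + v)
        s2.append(s2[-1] + v * v)

    def sse(i, j):
        # Sum of squared errors of y[i:j] around its own mean.
        total = s1[j] - s1[i]
        return (s2[j] - s2[i]) - total * total / (j - i)

    best_index, best_cost = None, sse(0, n)
    for cut in range(min_size, n - min_size + 1):
        cost = sse(0, cut) + sse(cut, n)
        if cost < best_cost:
            best_index, best_cost = cut, cost
    return best_index, best_cost
```

A tree builder would apply this step recursively to each side. Because every cut is chosen locally and never revisited, the final grouping can be worse than the one an exhaustive search finds, which is the trade-off the answer describes.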

    split_result = all_combination(lenth, i, k)
    min_mse, split_cb, all_cb = best_piecewise(dataset, 2)

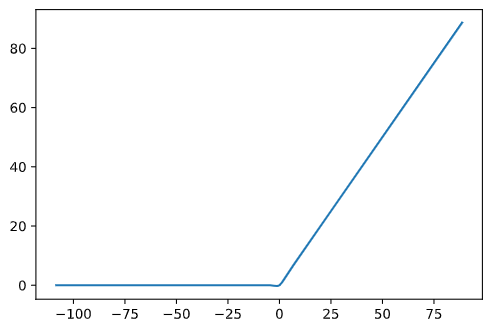

I am trying to create a piecewise linear regression that minimizes the MSE (mean squared error), rather than using linear regression directly. The method should use dynamic programming to calculate the different piecewise sizes and combinations of groups to achieve the overall MSE. I think the algorithm runtime is O(n²) and I wonder if there are ways to optimize it to O(nLogN)?

    import numpy as np
    from sklearn import linear_model
    from sklearn.metrics import mean_squared_error
    lr_model = linear_model.LinearRegression()
    #n is the number of points, m is the number of groups, k is the minimum number of points in a group
    print('There are not enough elements to split.')
    combination_bottom =
    combination_now =
    combination_last = combination_now.copy()
    combination_new = combination_new+1
    combination_now.append(combination_new.copy())
    def calc_sum_error(dataset,cb):#cb = combination
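One way the dynamic programming idea from the question could look is sketched below. It is not the asker's code: the name `optimal_segments` is hypothetical, it swaps `LinearRegression` for a closed-form simple least-squares fit computed from prefix sums, and it minimizes the summed squared error over all groups rather than a per-group MSE. It assumes the points are sorted by x and that groups are contiguous. Under those assumptions the runtime is O(m·n²), not O(n log n).

```python
def optimal_segments(x, y, m, k):
    """Split n points (sorted by x) into m contiguous groups of >= k points,
    minimizing the summed squared error of a separate line fit per group.
    Returns (total_sse, group_sizes). Illustrative sketch only."""
    n = len(x)
    if m < 1 or k < 1 or m * k > n:
        raise ValueError('There are not enough elements to split.')

    # Prefix sums so that any segment's least-squares error costs O(1).
    px, py, pxx, pxy, pyy = [0.0], [0.0], [0.0], [0.0], [0.0]
    for a, b in zip(x, y):
        px.append(px[-1] + a)
        py.append(py[-1] + b)
        pxx.append(pxx[-1] + a * a)
        pxy.append(pxy[-1] + a * b)
        pyy.append(pyy[-1] + b * b)

    def sse(i, j):
        # Residual sum of squares of the best line through points i..j-1.
        cnt = j - i
        sx, sy = px[j] - px[i], py[j] - py[i]
        sxx = pxx[j] - pxx[i] - sx * sx / cnt
        sxy = pxy[j] - pxy[i] - sx * sy / cnt
        syy = pyy[j] - pyy[i] - sy * sy / cnt
        if sxx <= 1e-12:  # all x (nearly) equal: fall back to the mean
            return max(syy, 0.0)
        return max(syy - sxy * sxy / sxx, 0.0)

    INF = float('inf')
    # cost[g][j]: best error for the first j points split into g groups.
    cost = [[INF] * (n + 1) for _ in range(m + 1)]
    prev = [[0] * (n + 1) for _ in range(m + 1)]
    cost[0][0] = 0.0
    for g in range(1, m + 1):
        for j in range(g * k, n - (m - g) * k + 1):
            for i in range((g - 1) * k, j - k + 1):
                c = cost[g - 1][i] + sse(i, j)
                if c < cost[g][j]:
                    cost[g][j], prev[g][j] = c, i

    # Walk the stored cut points backwards to recover the group sizes.
    sizes, j = [], n
    for g in range(m, 0, -1):
        i = prev[g][j]
        sizes.append(j - i)
        j = i
    return cost[m][n], sizes[::-1]
```

For example, `optimal_segments(list(range(8)), [0, 1, 2, 3, 10, 8, 6, 4], 2, 2)` splits the data where the slope changes. The absolute `1e-12` threshold on the x variance is a simplification and would need rescaling for data with very small x ranges.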
